Overview

This workshop is designed for technical professionals and engineering leaders who are interested in approaches to mitigating AI risks when working with documents.

Attendees will see how minimizing the probabilistic surface area and adopting event sourcing as an overarching architectural strategy can turn each document into an auditable, replayable record, bridging AI reasoning with observability and governance.

A Preview:

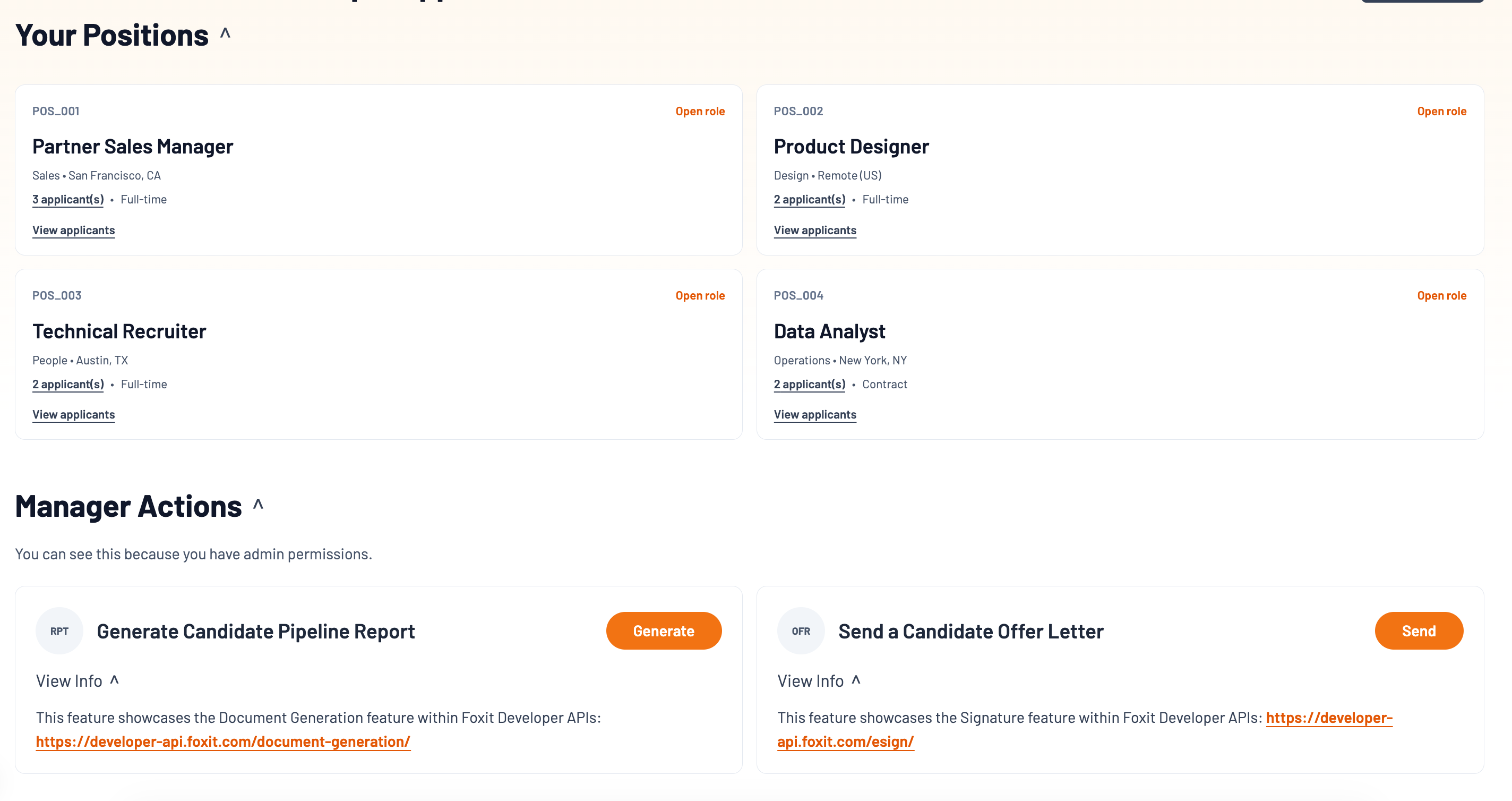

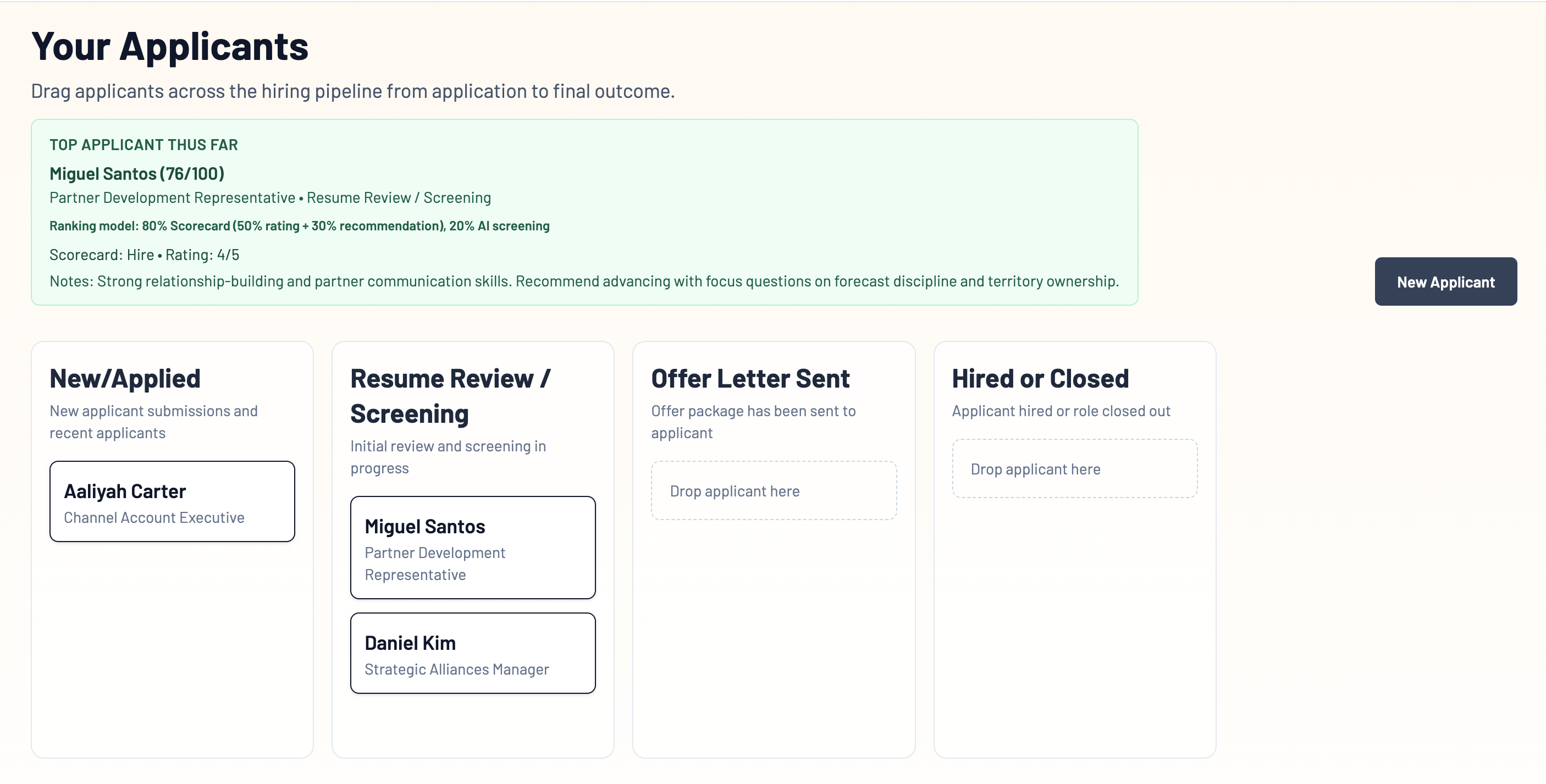

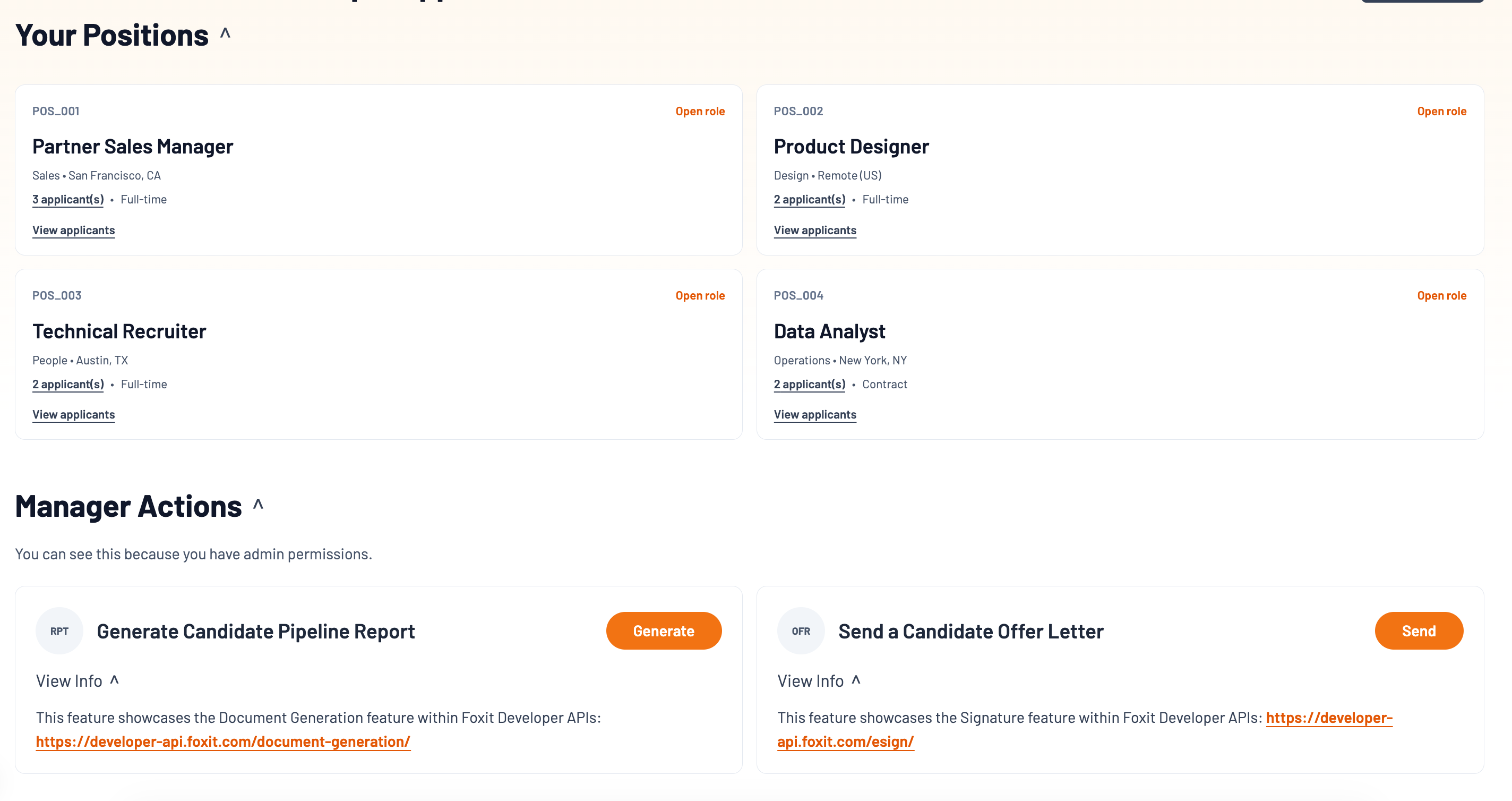

Applicant Tracking System (ATS) - Main Applicants View

Applicant Tracking System (ATS) - Positions View

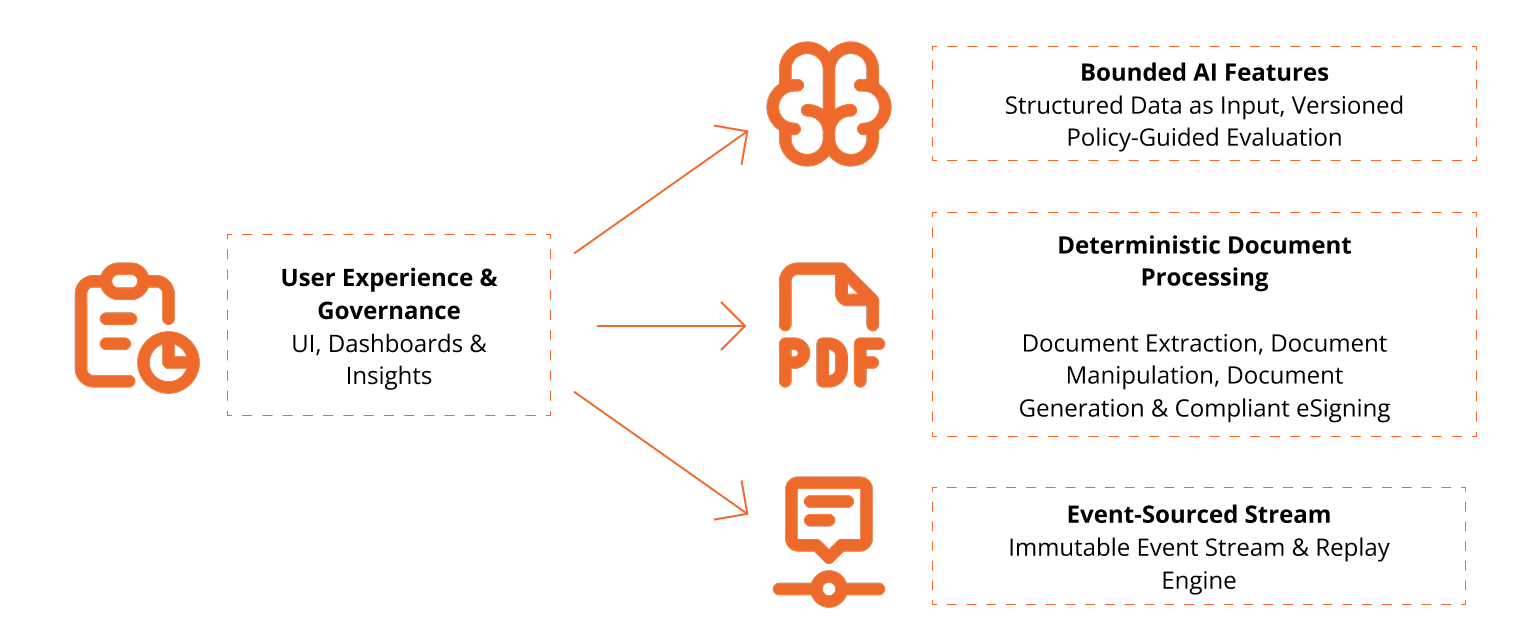

- Source-Driven Auditable AI System Design – Deterministic systems form the backbone, and AI operates within bounded reasoning layers.

Rather than treating AI as an add-on feature, we explore how deterministic APIs such as Foxit's Document Extraction, Document Generation, and eSign APIs can structure and constrain generative reasoning.

Objectives

By the end of this workshop, you will:

- Leave with a mental model of an event-sourced, deterministic-first pattern and UX habits that make decisions more observable, replayable, and explainable

- Understand how probabilistic surface area affects reliability in AI systems

- See how Foxit Document Extraction APIs produce structured, deterministic outputs

- Know how to deploy an AI evaluation strategy within bounded policy constraints

- Generate stable, template-driven decision reports with Foxit Document Generation

- Route documents for approval via eSign APIs to complete the accountability chain

Prerequisites

- Access to a terminal with a Node.js 24 LTS environment and internet connectivity

- Visual Studio Code or your preferred IDE

- Basic familiarity with REST/JSON (helpful but not required)

AI-driven systems are increasingly influencing hiring, compliance, legal review, and approvals.

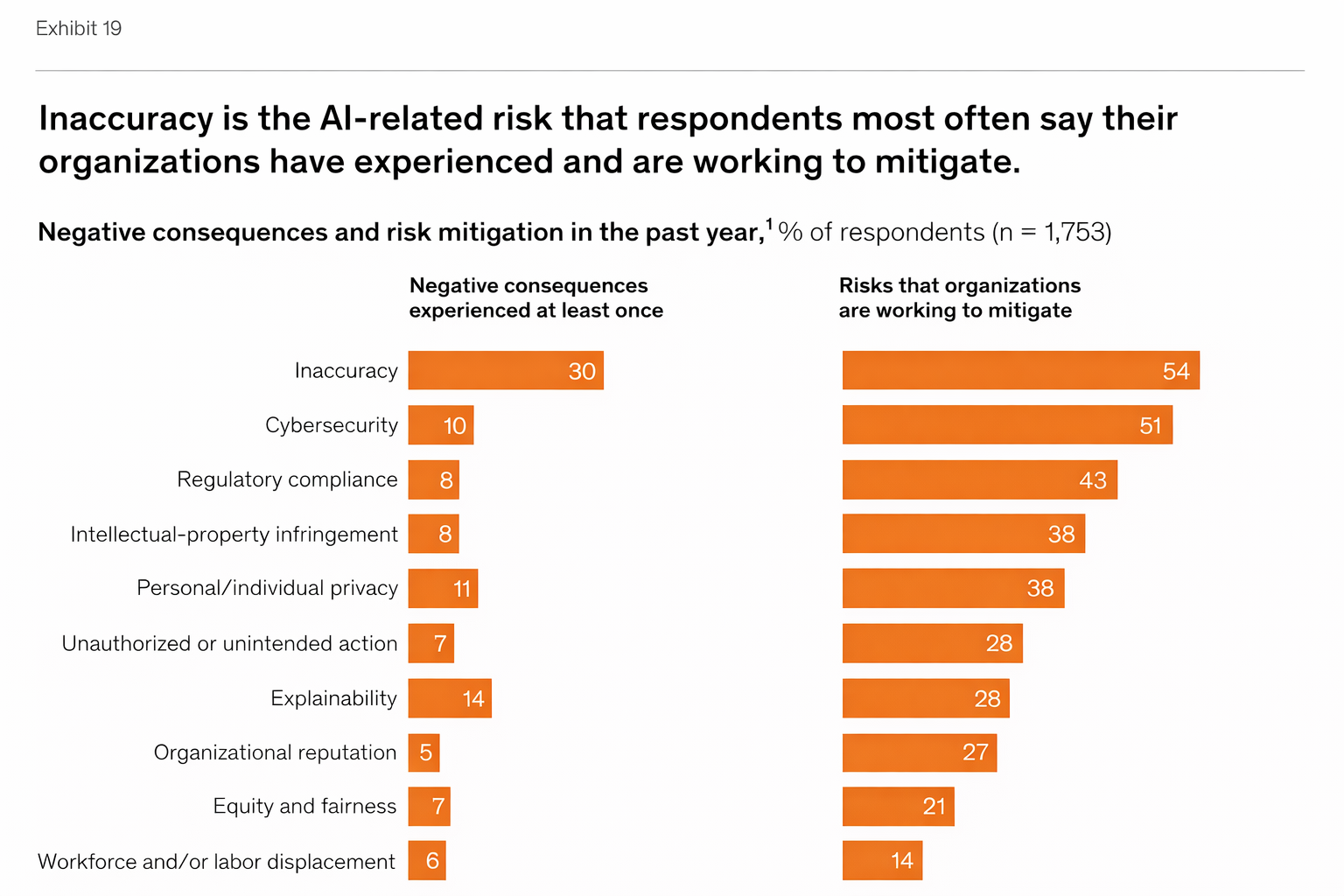

McKinsey Global Survey 2025

Recent survey data shows that efforts to mitigate AI risks are becoming more common in enterprise organizations:

- Inaccuracy is the most commonly experienced AI-related risk.

- Organizations are actively investing in mitigating explainability and compliance risks.

When AI outputs carry consequences, we need systems that are:

- defensible

- replayable

- explainable

1) Install dependencies

You need Node.js 24 (LTS) installed on your system.

Instructions for downloading and installing Node.js are available on the official Node.js website.

2) Download the project's zip file

- Extract the files somewhere on your computer and open the folder in Visual Studio Code or your preferred IDE.

- Create your environment file:

cp .env.example .env

- Update .env with your values:

FOXIT_DOCGEN_CLIENT_ID=...
FOXIT_DOCGEN_CLIENT_SECRET=...
FOXIT_ESIGN_API_ACCESS_TOKEN=...
AI_PROVIDER_KEY=...

Note: Keep credentials server-side only and never commit secrets.

- Run the ATS sample:

npm run dev
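Because the sample keeps credentials server-side, it helps to validate them in one place at startup. The following minimal TypeScript sketch is not the project's actual code. It assumes the server reads these variables from `process.env`, for example via Node's `--env-file=.env` flag; how the sample itself loads `.env` is not covered here. `loadConfig` is a hypothetical helper that fails fast and names any missing variable without printing its value.

```typescript
// Hypothetical startup check. Variable names match the .env values listed above;
// loadConfig is illustrative, not part of the sample project.
const REQUIRED_VARS = [
  "FOXIT_DOCGEN_CLIENT_ID",
  "FOXIT_DOCGEN_CLIENT_SECRET",
  "FOXIT_ESIGN_API_ACCESS_TOKEN",
  "AI_PROVIDER_KEY",
] as const;

type RequiredVar = (typeof REQUIRED_VARS)[number];
type ServerConfig = Record<RequiredVar, string>;

function loadConfig(env: Record<string, string | undefined> = process.env): ServerConfig {
  // Treat unset and whitespace-only values as missing.
  const missing = REQUIRED_VARS.filter((name) => !env[name]?.trim());
  if (missing.length > 0) {
    // Report only the variable names, never their values, so secrets stay out of logs.
    throw new Error(`Missing required environment variables: ${missing.join(", ")}`);
  }
  const config = {} as ServerConfig;
  for (const name of REQUIRED_VARS) {
    config[name] = env[name]!.trim();
  }
  return config;
}
```

Calling `loadConfig()` once when the server starts, and passing the result to the code that talks to Foxit and the AI provider, keeps secrets out of client bundles.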

3) Create your Foxit Developer API Account

Claim 500 free Foxit Developer API credits when you sign up for Foxit Developer APIs.

Step 1. Explore the application and notice the "workflow spine":

- Explore the ATS Positions and Applicants views

- Generate a report with Foxit by heading to Manager Actions → Generate Candidate Pipeline Report

The Source-Driven AI Pattern

AI emits decisions. The system commits events. The event log is the source of truth.

Rules of the road:

- AI never "owns" workflow state.

- Workflow state is deterministic.

- Every critical transition is an append-only event with versions + timestamps.

- UX renders from event history (timeline, badges, drill-down).

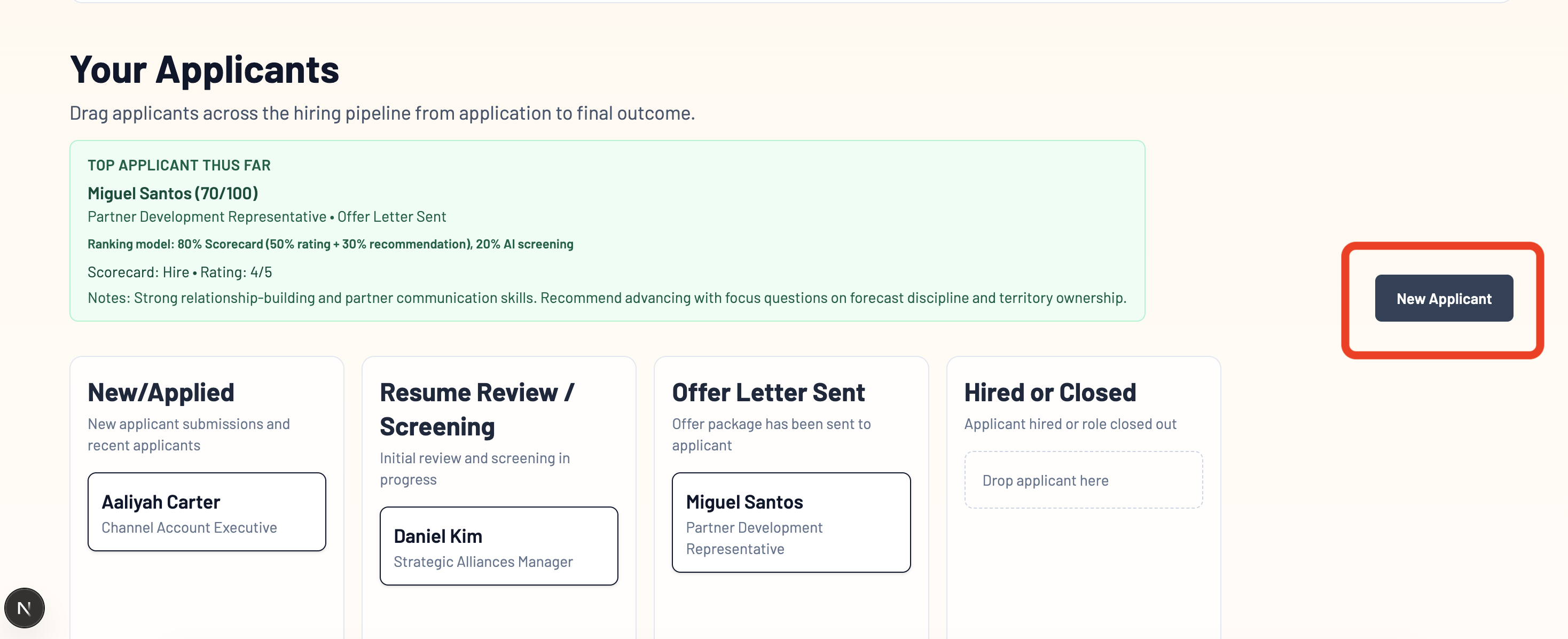
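The rules above can be sketched as a small in-memory event log. This illustrates the pattern and is not the sample's implementation. The `WorkflowEvent` shape, the `resume_uploaded` and `stage_changed` event types, and the `EventLog`/`replay` names are hypothetical; only `ai_evaluated` appears in this workshop. The log exposes no update or delete operation, and applicant state is computed by replaying history, so an AI result can only ever arrive as one more event.

```typescript
// Hypothetical event shapes for an ATS-style workflow; the sample's real schema may differ.
type EventType = "resume_uploaded" | "ai_evaluated" | "stage_changed";

interface WorkflowEvent {
  readonly id: number;        // position in the log, assigned by the system
  readonly type: EventType;
  readonly applicantId: string;
  readonly version: string;   // schema or policy version that produced the event
  readonly timestamp: string; // ISO-8601, assigned by the system, never by the AI
  readonly payload: Readonly<Record<string, unknown>>;
}

class EventLog {
  private readonly events: WorkflowEvent[] = [];

  // Append-only: there is deliberately no update or delete method.
  append(e: Omit<WorkflowEvent, "id" | "timestamp">, now: Date = new Date()): WorkflowEvent {
    // Object.freeze is shallow: the event itself is immutable, nested payload objects are not.
    const event = Object.freeze({ ...e, id: this.events.length + 1, timestamp: now.toISOString() });
    this.events.push(event);
    return event;
  }

  history(applicantId: string): readonly WorkflowEvent[] {
    return this.events.filter((e) => e.applicantId === applicantId);
  }
}

// Workflow state is never stored directly; it is derived by folding over the events in order.
interface ApplicantState {
  stage: string;
  evaluations: number;
}

function replay(events: readonly WorkflowEvent[]): ApplicantState {
  return events.reduce<ApplicantState>(
    (state, e) => {
      switch (e.type) {
        case "stage_changed":
          return { ...state, stage: String(e.payload.to) };
        case "ai_evaluated":
          // The AI's output is recorded, but it does not move the applicant between stages.
          return { ...state, evaluations: state.evaluations + 1 };
        default:
          return state;
      }
    },
    { stage: "applied", evaluations: 0 },
  );
}
```

Because `replay` is a pure fold over the history, running it twice on the same events yields the same state, which is what makes the timeline replayable. A production system would persist the log instead of holding it in memory.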

Step 2. Upload a New Applicant Resume

- Upload a resume under Applicants → New Resume.

- The Foxit Document Extraction API extracts the PDF's content into a deterministic structure that downstream steps can interpret semantically.

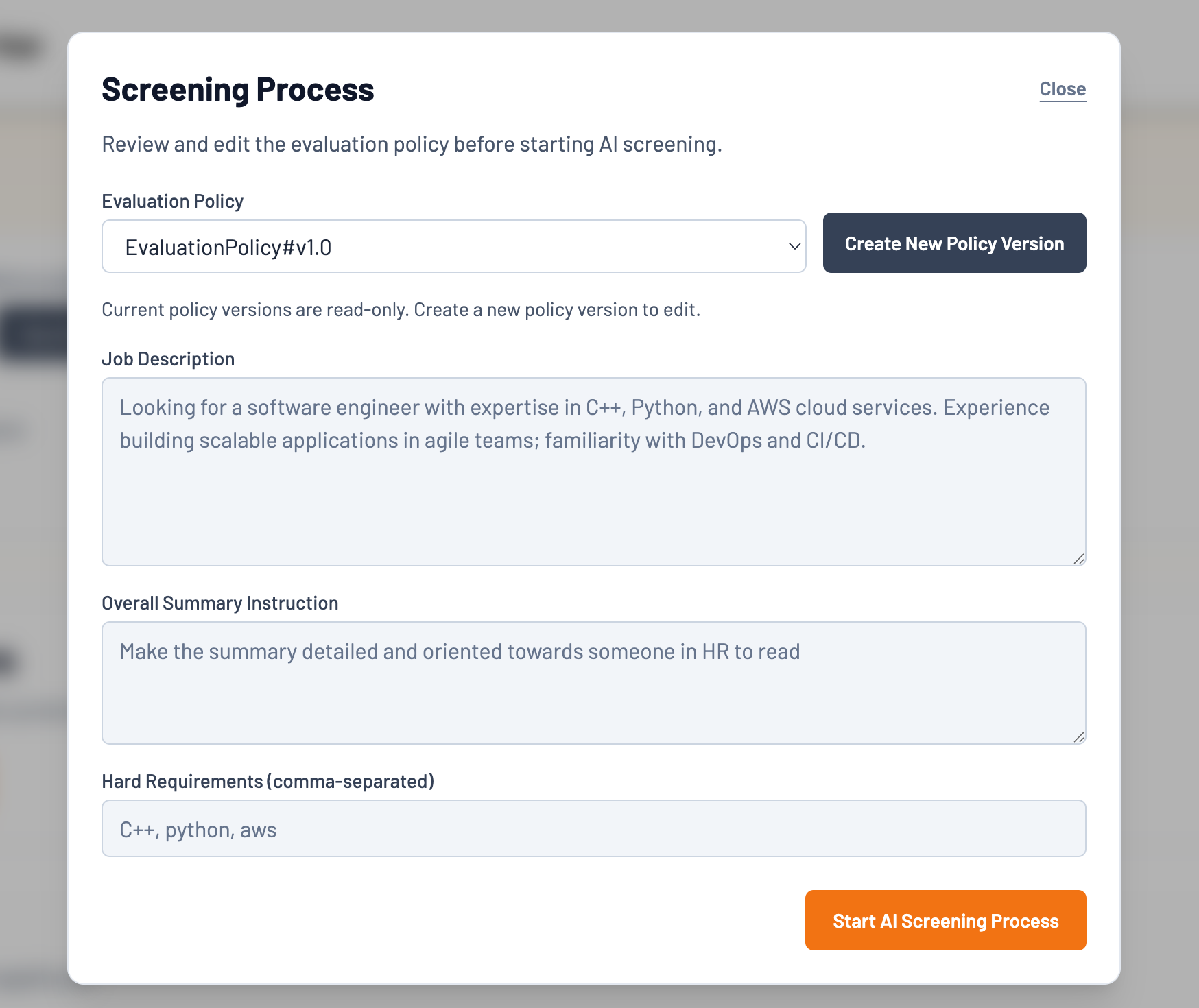
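To keep the AI's input stable, it helps to normalize the extracted fields and record a fingerprint of exactly what was extracted. The sketch below assumes the Foxit extraction result has already been mapped into a hypothetical `ExtractedResume` shape; the actual response format is not shown in this workshop. `canonicalJson` and `fingerprintResume` are illustrative helpers that sort object keys before hashing with SHA-256 from `node:crypto`.

```typescript
import { createHash } from "node:crypto";

// Hypothetical normalized shape; map the real extraction response into this first.
interface ExtractedResume {
  name: string;
  email: string;
  skills: string[];
  yearsOfExperience: number;
}

// Serialize with sorted object keys so logically identical data always yields identical bytes.
// Assumes JSON-compatible values (no undefined, functions, or cycles).
function canonicalJson(value: unknown): string {
  if (Array.isArray(value)) return `[${value.map(canonicalJson).join(",")}]`;
  if (value !== null && typeof value === "object") {
    const obj = value as Record<string, unknown>;
    const entries = Object.keys(obj)
      .sort()
      .map((k) => `${JSON.stringify(k)}:${canonicalJson(obj[k])}`);
    return `{${entries.join(",")}}`;
  }
  return JSON.stringify(value);
}

// Normalize the fields we control (trimmed name, lowercase email, de-duplicated sorted skills),
// then fingerprint the result so an event can record exactly which input the AI saw.
function fingerprintResume(raw: ExtractedResume): { data: ExtractedResume; sha256: string } {
  const data: ExtractedResume = {
    name: raw.name.trim(),
    email: raw.email.trim().toLowerCase(),
    skills: [...new Set(raw.skills.map((s) => s.trim().toLowerCase()))].sort(),
    yearsOfExperience: raw.yearsOfExperience,
  };
  const sha256 = createHash("sha256").update(canonicalJson(data)).digest("hex");
  return { data, sha256 };
}
```

Storing `sha256` on the upload event lets a later replay confirm that an evaluation was computed from the same extracted input.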

Step 3. Start the AI Screening Process

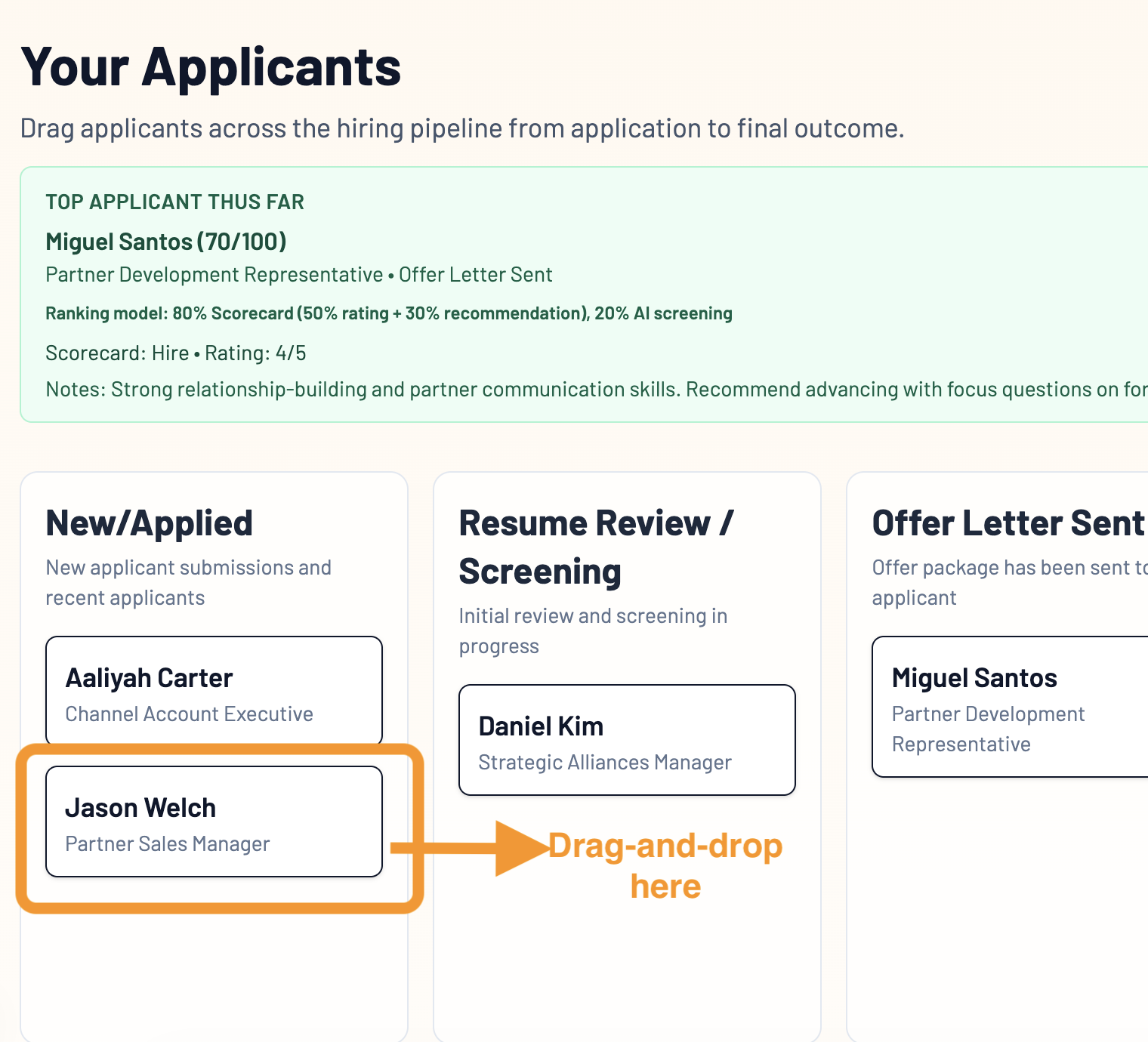

- Drag and drop the new applicant into the "Resume Review/Screening" stage.

- Select the default EvaluationPolicy_v1.0 policy and continue.

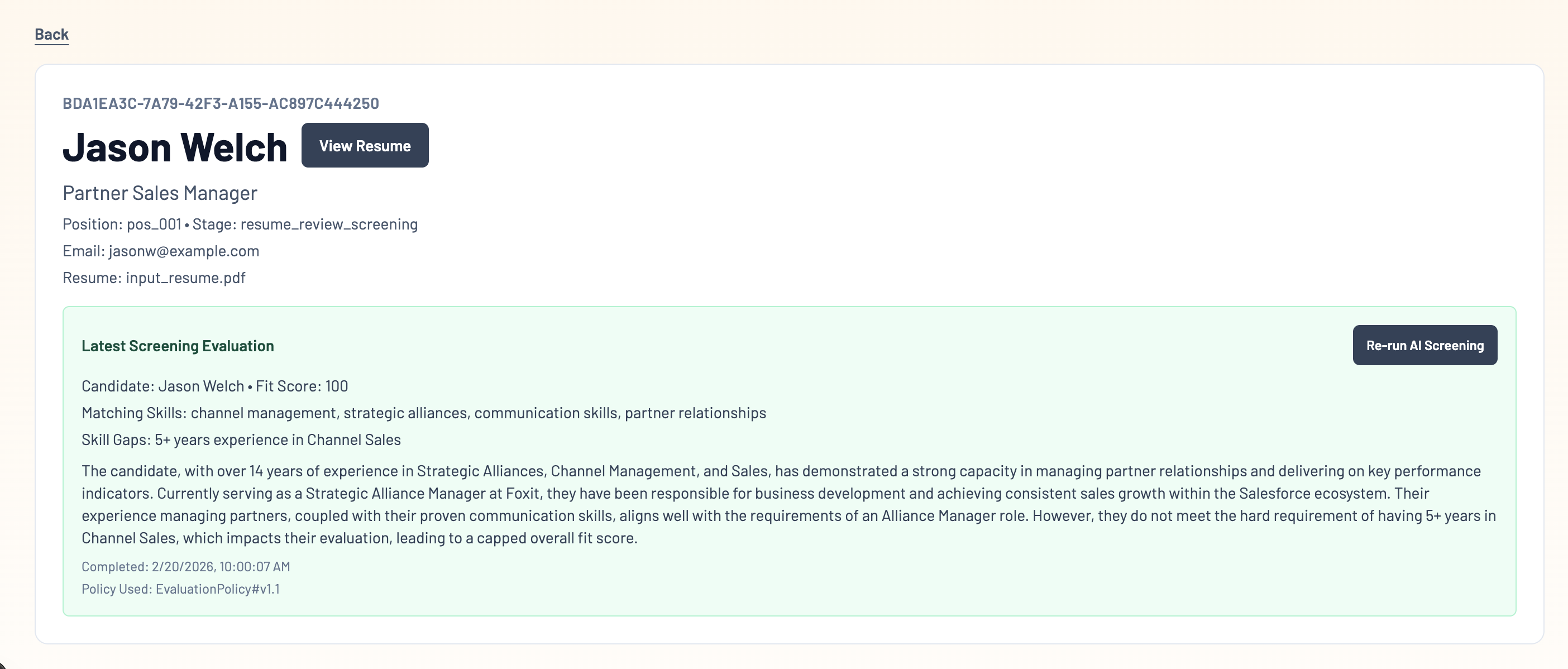
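One way to make "bounded" concrete is to treat the model's output as untrusted and accept it only if it fits the selected policy. In this sketch, the policy fields (`criteria`, `allowedRecommendations`, the score range) and the `validateAgainstPolicy` helper are hypothetical; only the policy name `EvaluationPolicy_v1.0` comes from the workshop. Output that fails validation would be rejected instead of committed as an `ai_evaluated` event.

```typescript
// Hypothetical policy and AI-output shapes for illustration.
interface EvaluationPolicy {
  readonly version: string;                         // e.g. "EvaluationPolicy_v1.0"
  readonly criteria: readonly string[];             // the only criteria the model may score
  readonly allowedRecommendations: readonly string[];
  readonly minScore: number;
  readonly maxScore: number;
}

interface AiEvaluation {
  recommendation: string;
  scores: Record<string, number>;
  rationale: string;
}

type Validation = { ok: true; evaluation: AiEvaluation } | { ok: false; errors: string[] };

// The model's raw output is untrusted. It is accepted only if every field stays inside
// the policy's bounds; otherwise it is rejected deterministically with a list of reasons.
function validateAgainstPolicy(policy: EvaluationPolicy, raw: unknown): Validation {
  const errors: string[] = [];
  const e = raw as Partial<AiEvaluation> | null;
  if (typeof e?.recommendation !== "string" || !policy.allowedRecommendations.includes(e.recommendation)) {
    errors.push(`recommendation must be one of: ${policy.allowedRecommendations.join(", ")}`);
  }
  if (typeof e?.rationale !== "string" || e.rationale.trim() === "") {
    errors.push("rationale is required");
  }
  const scores: Record<string, number> = e?.scores ?? {};
  for (const criterion of policy.criteria) {
    const s = scores[criterion];
    if (typeof s !== "number" || s < policy.minScore || s > policy.maxScore) {
      errors.push(`score for "${criterion}" must be a number in [${policy.minScore}, ${policy.maxScore}]`);
    }
  }
  for (const key of Object.keys(scores)) {
    if (!policy.criteria.includes(key)) errors.push(`unexpected criterion "${key}"`);
  }
  return errors.length === 0 ? { ok: true, evaluation: e as AiEvaluation } : { ok: false, errors };
}
```

Because the policy carries a version, the accepted evaluation can be stored together with `policy.version`, which is what makes the comparison in the next step possible.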

Step 4. Bounded AI Approach: Versioned policy evaluation

Re-run AI Screening, this time changing the policy version:

EvaluationPolicy_v1.0 → EvaluationPolicy_v1.1

Once the evaluation completes, you will see:

- a new screening evaluation highlighted

- a second ai_evaluated event in the timeline

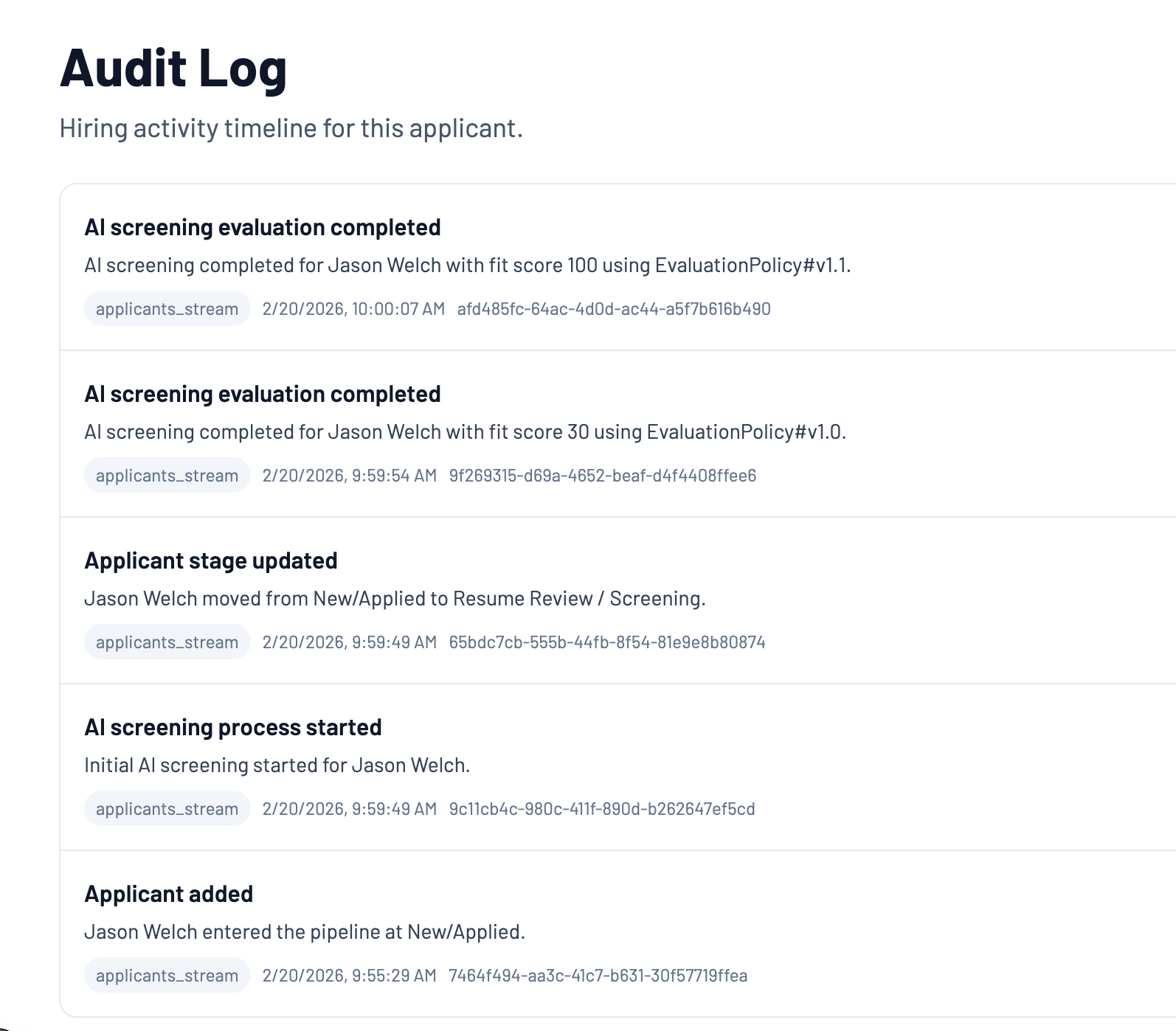

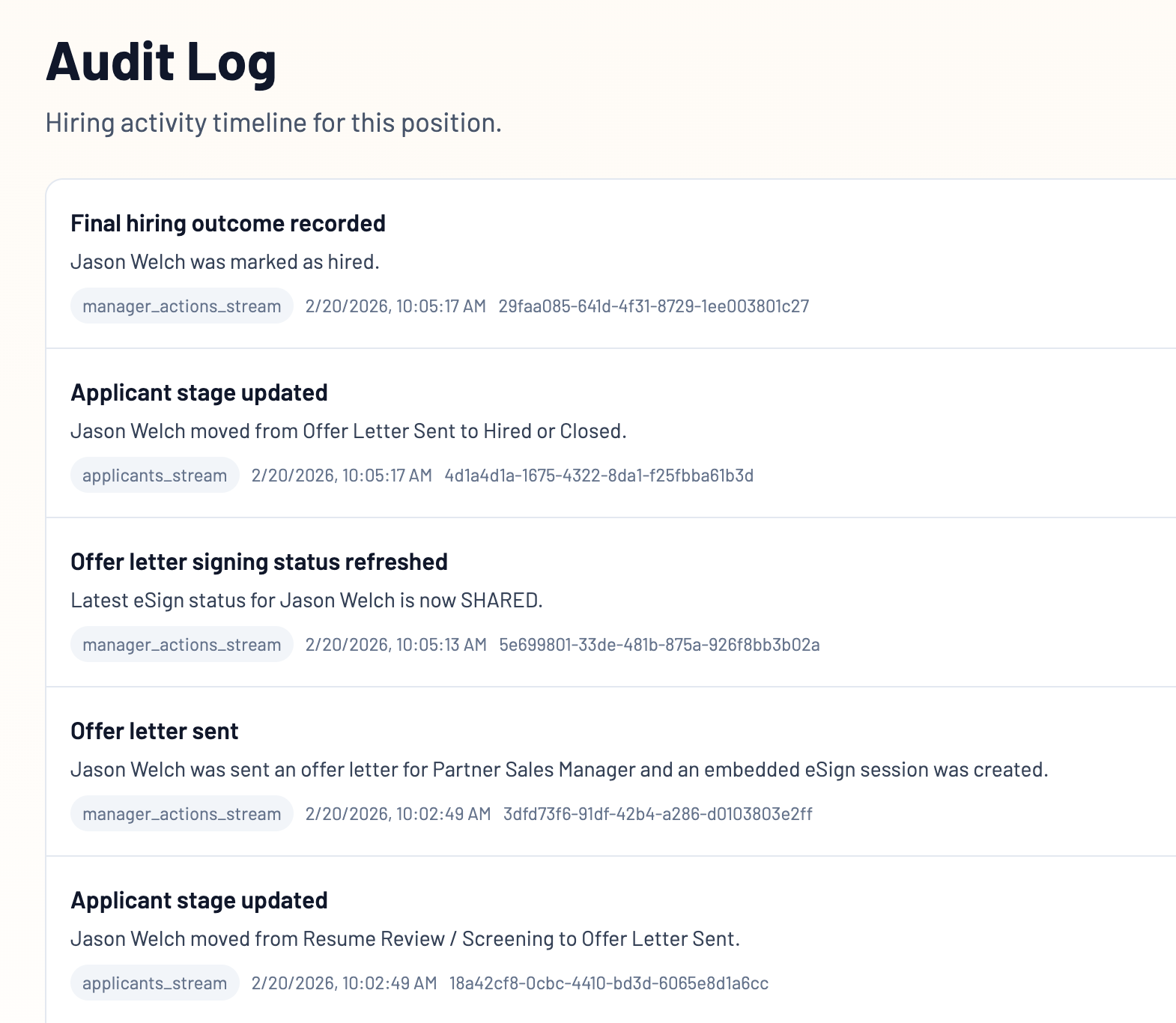
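Because each run appends a new `ai_evaluated` event instead of overwriting the previous one, the two evaluations can be compared side by side. This sketch assumes a hypothetical event shape carrying `seq`, `policyVersion`, `recommendation`, and per-criterion `scores`. `compareLatestTwo` is an illustrative helper that reports what changed between the last two runs.

```typescript
// Hypothetical ai_evaluated event shape; the sample's real payload may differ.
interface AiEvaluatedEvent {
  readonly seq: number;           // position in the append-only log
  readonly policyVersion: string; // e.g. "EvaluationPolicy_v1.0"
  readonly recommendation: string;
  readonly scores: Readonly<Record<string, number>>;
}

interface ScoreChange {
  criterion: string;
  before?: number; // undefined if the older policy did not score this criterion
  after?: number;  // undefined if the newer policy no longer scores it
}

interface PolicyComparison {
  from: string;
  to: string;
  recommendationChanged: boolean;
  changes: ScoreChange[];
}

// The "current" evaluation is simply the latest event; earlier ones remain for comparison.
function compareLatestTwo(events: readonly AiEvaluatedEvent[]): PolicyComparison | undefined {
  const sorted = [...events].sort((a, b) => a.seq - b.seq); // copy, so the input is untouched
  if (sorted.length < 2) return undefined;
  const [prev, curr] = sorted.slice(-2);
  const criteria = [...new Set([...Object.keys(prev.scores), ...Object.keys(curr.scores)])].sort();
  const changes = criteria
    .filter((c) => prev.scores[c] !== curr.scores[c])
    .map((c) => ({ criterion: c, before: prev.scores[c], after: curr.scores[c] }));
  return {
    from: prev.policyVersion,
    to: curr.policyVersion,
    recommendationChanged: prev.recommendation !== curr.recommendation,
    changes,
  };
}
```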

Step 5. The Trust Layer: Event timeline as the UI backbone

The audit timeline on the page shows that:

- events are immutable and append-only.

- event types are mapped to recruiter-friendly phrasing, so users see familiar language they can trust.

- a JSON download of the events is always available for validation.

This is not observability bolted on after the fact: the timeline is the product's source of truth for every event that takes place in the system.

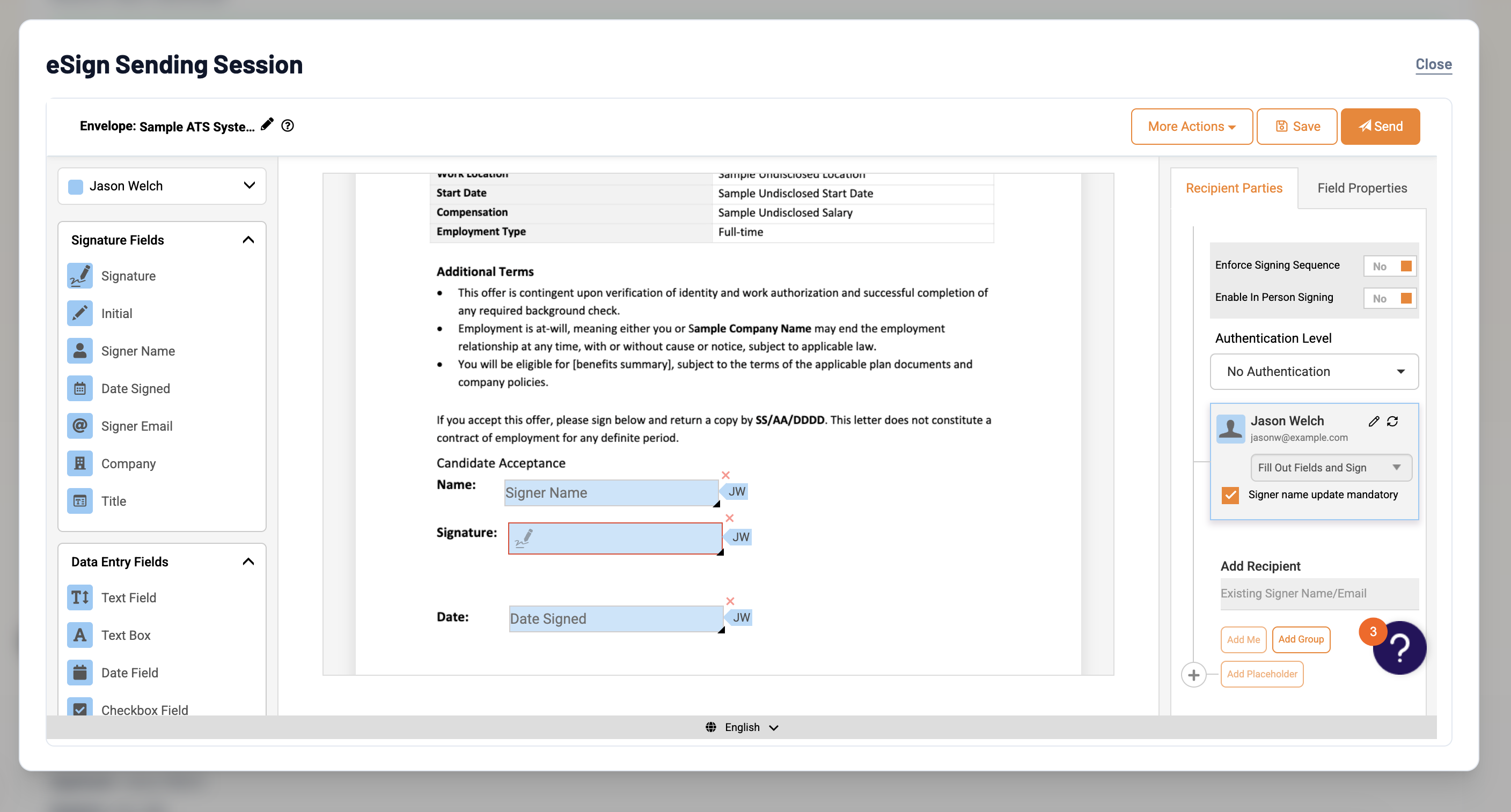
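The mapping from event types to recruiter-friendly phrasing can be a fixed lookup table. That keeps the wording deterministic, while the JSON export still contains the raw events. In this sketch, `ai_evaluated`, `signature_requested`, and `signature_completed` are event names from the workshop. `resume_uploaded`, `stage_changed`, the phrases themselves, and the `toTimelineRow`/`exportJson` helpers are hypothetical.

```typescript
// Hypothetical timeline event shape.
interface TimelineEvent {
  readonly type: string;
  readonly timestamp: string; // ISO-8601
  readonly payload: Readonly<Record<string, unknown>>;
}

// Fixed lookup: the same event always renders with the same wording.
const PHRASES: Record<string, (p: Readonly<Record<string, unknown>>) => string> = {
  resume_uploaded: () => "Resume received",
  ai_evaluated: (p) => `AI screening completed (policy ${String(p.policyVersion)})`,
  stage_changed: (p) => `Moved to ${String(p.to)}`,
  signature_requested: () => "Offer letter sent for signature",
  signature_completed: () => "Offer letter signed",
};

function toTimelineRow(e: TimelineEvent): string {
  // Unknown event types still appear, with a generic label, so nothing is hidden from the user.
  const phrase = PHRASES[e.type]?.(e.payload) ?? `Event: ${e.type}`;
  return `${e.timestamp}  ${phrase}`;
}

// The download contains the raw events, not the friendly text, so the timeline can be checked against it.
function exportJson(events: readonly TimelineEvent[]): string {
  return JSON.stringify(events, null, 2);
}
```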

Step 6. Accountability loop: Offer letter flow with Foxit eSign

Move a candidate to the Offer Letter Sent stage.

- HR is shown the eSign sending session, where they verify the offer letter and send it for eSignature.

- events emitted: signature_requested, signature_completed (plus eSign status updates)

AI can recommend, but signatures create accountability and support compliance.
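The signature flow can be modeled as a deterministic state machine, so that eSign status updates, not AI output, drive the accountability step. This sketch rests on assumptions. The `SignatureStatus` values `viewed` and `declined` and the `applyStatusUpdate` helper are hypothetical, and the event names follow the `signature_requested` / `signature_completed` pattern from the workshop. The sketch does not call the Foxit eSign API.

```typescript
// Hypothetical signing states; "viewed" and "declined" are illustrative extra status updates.
type SignatureStatus = "draft" | "requested" | "viewed" | "completed" | "declined";

// Deterministic transition table: the only moves the workflow accepts.
const ALLOWED: Record<SignatureStatus, readonly SignatureStatus[]> = {
  draft: ["requested"],
  requested: ["viewed", "completed", "declined"],
  viewed: ["completed", "declined"],
  completed: [], // terminal
  declined: [],  // terminal
};

interface SignatureEvent {
  readonly type: string; // e.g. "signature_requested", "signature_completed"
  readonly from: SignatureStatus;
  readonly to: SignatureStatus;
}

// Validate a status update and return the event to append; illegal moves throw instead.
function applyStatusUpdate(current: SignatureStatus, next: SignatureStatus): SignatureEvent {
  if (!ALLOWED[current].includes(next)) {
    throw new Error(`Illegal signature transition: ${current} -> ${next}`);
  }
  return { type: `signature_${next}`, from: current, to: next };
}
```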

Now head back into the Applicants View and move your candidate to Hired.

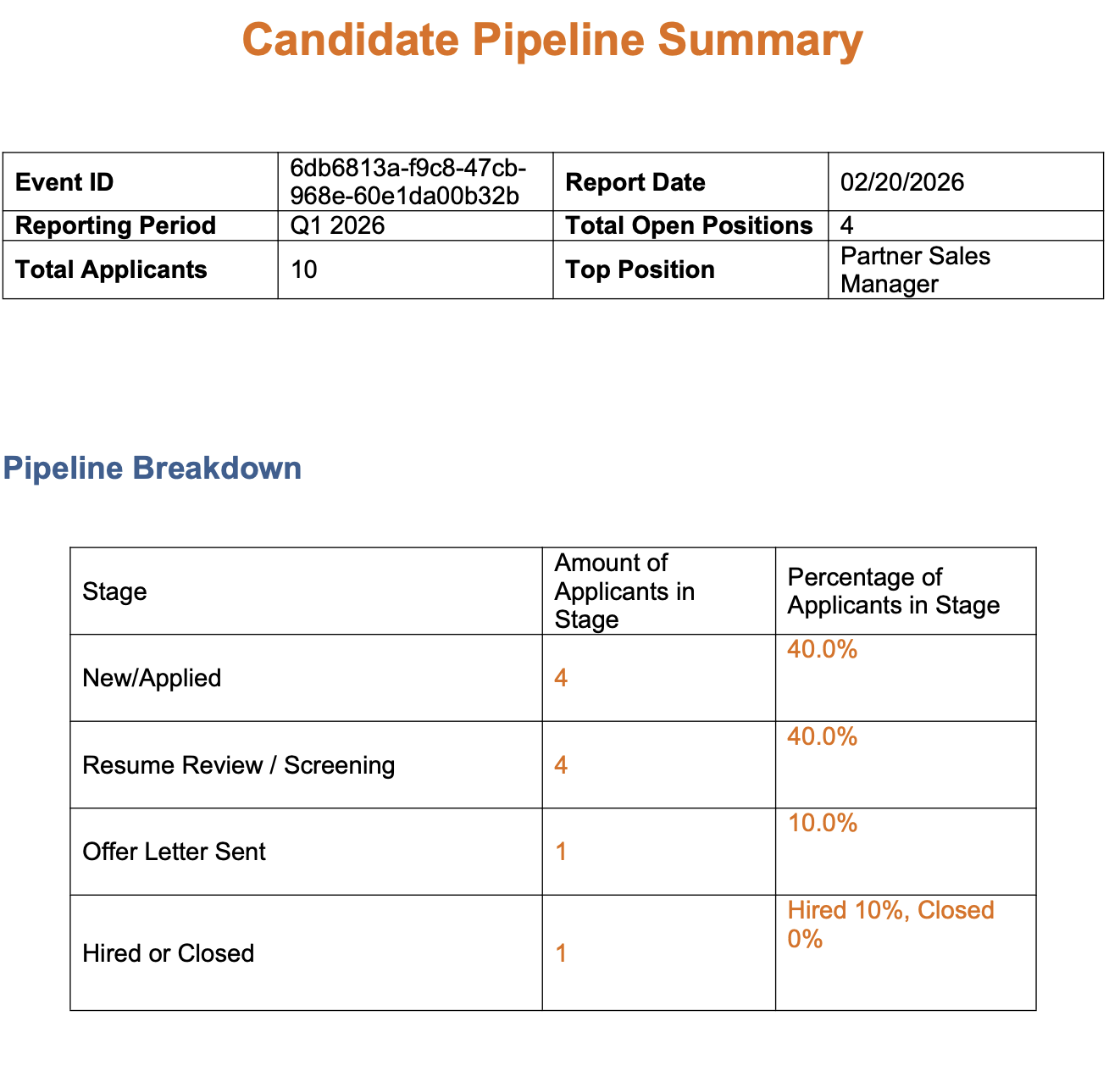

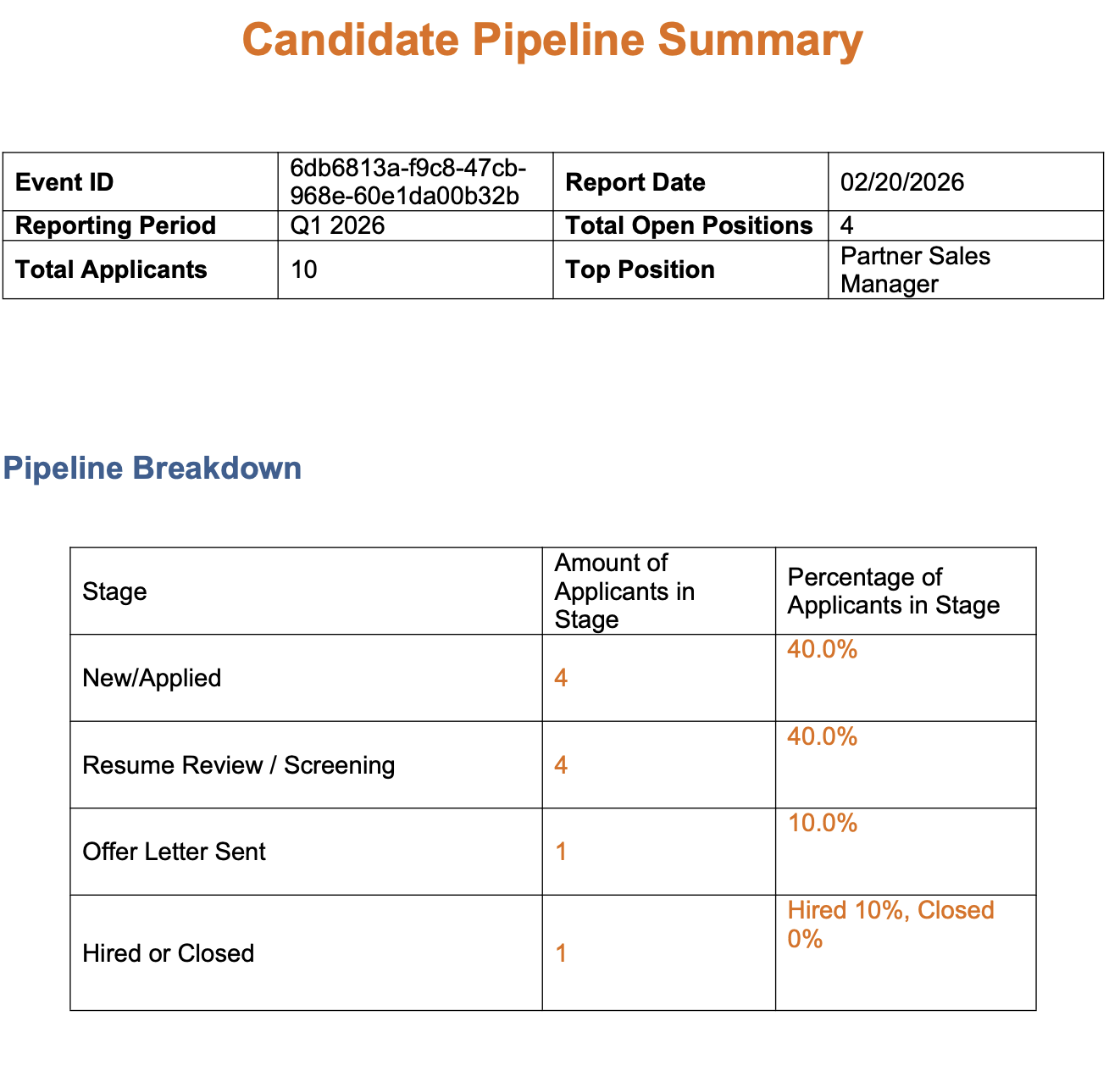

Step 7. Stable artifacts: Candidate pipeline report with Foxit Document Generation

From Manager Actions, run Generate Candidate Pipeline Report once more.

- A unique event is also emitted for each report generated (e.g., CandidatePipelineReportGenerated).

- Foxit Document Generation then turns structured workflow state into a stable artifact that stays consistent across runs.
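Keeping the generated report stable across runs depends partly on giving the template a deterministic payload. The sketch below shows one way such a payload could be prepared. It is an assumption, not the sample's code or the Foxit Document Generation API. `ApplicantSummary`, `buildReportData`, and `reportDigest` are hypothetical. The sort order is fixed, no wall-clock time is included, and the SHA-256 digest is something one could record on the `CandidatePipelineReportGenerated` event.

```typescript
import { createHash } from "node:crypto";

// Hypothetical applicant summary derived from replayed workflow state.
interface ApplicantSummary {
  id: string;
  name: string;
  stage: string;
  position: string;
}

interface PipelineReportData {
  readonly asOfEventSeq: number; // the report describes state up to this event
  readonly stages: { stage: string; count: number }[];
  readonly applicants: ApplicantSummary[];
}

// Plain code-unit comparison: unlike locale-aware sorting, it does not vary between environments.
const cmp = (x: string, y: string): number => (x < y ? -1 : x > y ? 1 : 0);

// Same event history in, same payload out: fixed sort order, fixed key order, no timestamps.
function buildReportData(applicants: readonly ApplicantSummary[], asOfEventSeq: number): PipelineReportData {
  const sorted = [...applicants]
    .sort((a, b) => cmp(a.stage, b.stage) || cmp(a.name, b.name) || cmp(a.id, b.id))
    .map(({ id, name, stage, position }) => ({ id, name, stage, position }));
  const counts = new Map<string, number>();
  for (const a of sorted) counts.set(a.stage, (counts.get(a.stage) ?? 0) + 1);
  // Map preserves insertion order, and applicants were sorted by stage first.
  const stages = [...counts].map(([stage, count]) => ({ stage, count }));
  return { asOfEventSeq, stages, applicants: sorted };
}

// Digest of the exact payload sent to the template.
function reportDigest(data: PipelineReportData): string {
  return createHash("sha256").update(JSON.stringify(data)).digest("hex");
}
```

If two runs over the same event history produce the same digest, any difference in the generated documents must come from the template rather than from the data.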

Key takeaways

- Deterministic workflow state + events make AI systems auditable and replayable.

- Policy-bounded AI makes decisions explainable and versionable.

- Foxit APIs provide reliable document and signing primitives:

  - Extraction for stable inputs

  - Document Generation for stable artifacts

  - eSign for accountable approvals

A Call to action!

Clone the repo and adapt the pattern to your own workflow:

- procurement approvals

- compliance reviews

- contract intake

- claim processing

- onboarding / HR forms